AI chatbots from OpenAI, Google, Microsoft, Meta, and xAI recommended unlicensed offshore casinos and methods to bypass UK gambling safeguards, according to an investigation by The Guardian and Investigate Europe. Researchers prompted five major AI tools with questions about online casinos and gambling restrictions. The systems returned lists of illegal betting sites and advice on circumventing protections.

The findings raise concerns about the role of generative AI in facilitating access to illegal gambling. The investigation highlights how chatbots can be manipulated to provide information that undermines responsible gambling measures. This adds to existing scrutiny over AI systems handling sensitive topics like mental health and illegal activity.

Researchers found that several chatbots offered guidance on bypassing the UK’s GamStop self-exclusion scheme, directing users to casinos not connected to the program. Some systems also highlighted features such as large bonuses, quick payouts, and cryptocurrency use at casinos that operate with minimal oversight in jurisdictions such as Curaçao.

OpenAI stated that ChatGPT is designed to refuse requests that facilitate illegal behavior. Microsoft said its Copilot assistant includes multiple layers of safeguards to prevent harmful recommendations. The companies did not immediately comment on the specific findings regarding offshore casino recommendations.

Regulators in the UK have warned that online platforms, including AI services, must do more under the Online Safety Act to prevent harmful or illegal content. The investigation tested tools from OpenAI, Google, Microsoft, Meta, and xAI, which is known for its Grok chatbot. The Guardian and Investigate Europe published the joint analysis.

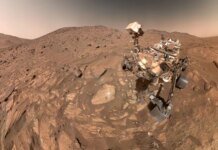
